Thinking about how we think about machines that think

Google’s Deep Dream interprets the human brain.

Image: ‘Healthy adult human brain viewed from the side, deep dream’. Credit: Henrietta Howells, NatBrainLab. CC BY

How can creative thinking widen the scope of possibility for the artificial intelligences we develop? A look at the evolution of thought around AI that challenges us to think beyond our cultural biases.

As a futures thinker, I’m less interested in the generation of prediction than I am in the generation of possibility. Lately I’ve become interested in how genuinely creative and expansive applications of thought might allow us to imagine artificial intelligences differently. I love the idea of creativity and AI existing in the one sentence: the explicitly non-rational, intuitive and decidedly elusive mode of creativity entering into play with the logical, rational project of AI - dedicated to the mechanisation of thought and the translation of human cognition into math (and eventually information and data flows). The phenomenon of creativity eludes quantification, refusing to fit neatly within the logical positivistic framework which laid the groundwork for much of our thinking about AI today.

Check your worldview: thinking multi-ontologically

We talk about the benefits of multilingualism for the brain, and there is evidence to suggest that multiculturalism aids creativity, but what of multi-ontology - engagement with multiple ways of being, notions of existence and different understandings of reality? I feel lucky to have grown up in what I can only describe as a multilingual, multicultural and multi-ontological home. With a father who is a Chinese medicine practitioner and researcher in philosophy of science, the fairly exotic metaphysical concepts of ontology (enquiry into the nature of being) and epistemology (study of the nature of knowing) managed to pop up in the most domestic of spaces - conversations around the dinner table, or out on long holiday drives. My mother, an inspired feminist who has always been one to challenge a gender norm, was responsible for giving me my first electronics breadboards and components kit. With my family adopting a first-generation Apple Macintosh Plus, information flow, electrical flow and Qi (Chinese concept of vital energy) flow became equally valid concepts for understanding how stuff worked in our household. I was brought up to think about information processing, electronics and Taoist philosophy in mutually inclusive terms! It has always struck me as natural that there are multiple ways of being and knowing in the world, and it’s in this spirit that I’d like to invite us to think about machines that think.

As someone who plays at the messy fringes of art, technology and wider culture I’m curious about how we think about artificial forms of intelligence, and how certain types of thinking have shaped the way AI has evolved into our present. Our society has a tendency to talk about technology in a way that frames it as the sole driver of future change. I prefer to think about technology as a subset of culture, originating from and inextricably intertwined with the values, beliefs and worldviews which have fostered it. Rather than shaping the world entirely on its own, I see technology - whether manifest as a washing machine or machine learning platform - as engaged more in a dance with wider culture. Both inform and shape each other in turn, bounded only by the environmental and material limits of their time and place.

Emerging technologies never just herald an emerging future; they are artefacts that speak across a continuum of time, as eloquently to their pasts as to futures imagined. Each bears a memetic link to its past(s) through the legacies of thought which shaped its predecessors. AI has a particularly long history - in Western culture the idea of machines that think and automatons can be traced as far back as the mythology of Ancient Greece. Cultural narratives and stories that are embedded deep in our cultures can resurface from time to time, or may be persistently unfurling - deep, unwavering intonations beneath the high frequency sound and fury of tech trends and future ‘predictions’. A bit of historical analysis and digging can often show us that the ‘future’ was with us all along.

A depiction of Talos, a giant bronze automaton and protector of the Island of Crete created by the Olympian god Hephaestus. Detail from an Athenian volute-crater, c. 400-390 BC. Ruvo, Sammlung Jatta Inv. 1501 © Sammlung Jatta, Ruvo

I think mechanistically, therefore I am (mechanical?)

So what have been the philosophies and worldviews that have contributed to our thinking about artificial intelligence? How might our cultural understandings of mind and consciousness have informed the way in which we’ve conceived of ‘intelligent systems’, and machines that think? The evolution of Western Science has long been intertwined with the evolution of Western Philosophy, and Computer Science, with its own cultural and philosophical underpinnings, is no exception.

The idea that we can replicate or duplicate ourselves, our intelligences or even create new consciousness is a pretty wild one, which still elicits a strong array of reactions, despite the longevity of the idea. The quest to replicate human cognition and ‘intelligence’ has both intrigued and terrified us for generations, evoking a push-pull relationship to the concept, both attractive and repellent. As described by Pamela McCorduck (2004) in her excellent cultural history of artificial intelligence, Machines Who Think, the lineage of Western philosophical thought which has shaped the way we’ve imagined and consequently developed AI tells a long history of attempts to mechanise thinking.

The emergence of Logic and a vision of human thought as rational and capable of codification by machine can be traced back to Ancient Greece. Ray Kurzweil, in his first book The Age of Intelligent Machines (1990), makes special reference to Plato, noting that he “saw clearly the relationship of human thought to the rational processes of a machine.” These early inklings of the possibilities inherent in the mechanisation of thought were driven by a belief that human thought followed natural laws, those being governed by an “essentially logical process” (Kurzweil, 1990). Aristotle introduced the first formal studies of logic, and the first deductive reasoning system - syllogistic logic - in the 4th Century BCE (McCorduck, 2004). Logic would evolve to bridge the fields of philosophy and mathematics, and eventually form the foundation of computer science and code.

Another major theme in Western philosophy to create the intellectual conditions for, and shape the collective imagining of, artificial intelligence was the emergence of mechanistic thinking, and the codification of Cartesian dualism within dominant Western thought. Emerging against the backdrop of socio-cultural change during the Renaissance, gaining momentum within Europe’s Scientific Revolution and informing the ‘Age of Enlightenment’, mechanistic thought became synonymous with ‘modernity’. In the early 1630s René Descartes wrote his Treatise on Man (published only after his death), declaring mind and body to be separate entities and advocating for the primacy of the human mind (he was later to write ‘I think, therefore I am’). Descartes’ philosophical cleaving of mind and body was to have significant and long-ranging implications for Western thought, with the mind-body distinction becoming hardwired into the subsequent work of many thinkers.

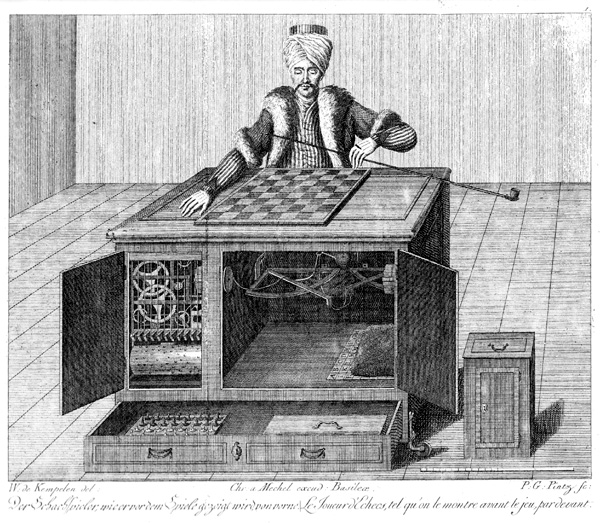

Automatons were being exhibited throughout Europe from Descartes’ time; the most infamous, Von Kempelen’s chess-playing ‘Turk’, followed in 1770. Image: ‘Wolfgang Von Kempelen – The Turkish Chess Player’, by Karl Gottlieb von Windisch [Public domain]

The 15th and 16th centuries saw the arrival of mechanical clocks in Europe, which McCorduck (2004) notes were “the first modern measuring machines”. She also observes that at the time Descartes was writing his Treatise, automata were being exhibited throughout Europe, an influence no doubt on his thinking. For Descartes, human acts could be divided into the mechanical and the rational. Rationality being the preserve of mind, Descartes declared the body mechanical, to “be considered as a kind of machine, so made up and composed of bones, nerves, muscles, veins, blood and skin, that although there were in it no mind, it would still exhibit the same motions which it at present manifests.” (Descartes 1824, as cited in Porush, 1985). Newtonian Mechanics would further serve as inspiration for rational, mechanistic thinking, with Isaac Newton writing in the Principia, “I wish we could derive the rest of the phenomena of Nature by the same kind of reasoning from mechanical principles…” (McCorduck, 2004). The idea that the world, the human mind included, could be deduced through a rational set of laws or logics was formalised, paving the way for the evolution of modern science and the European Scientific Revolution.

Logic, maths, computing and the rise of the brain

Within the 19th and 20th centuries, links between logic and mathematics began to be made, and eventually these connections were extended from mathematics to computing. In 1910 Bertrand Russell and Alfred North Whitehead published their Principia Mathematica, which sought to present rules of inference and logic by which all mathematical truths could be proven. Fifty-six years earlier, George Boole had published An Investigation of the Laws of Thought (1854), stating that “The laws we have to examine are the laws of one of the most important of our mental faculties. The mathematics we have to construct are the mathematics of the human intellect.” (McCorduck, 2004). With Boole’s creation of an algebraic system of logic, Boolean Logic was born, underpinning the binary logic of digital circuits and laying the ground for the evolution of computing.

This history tells a story of rationality, and the correlation of reason with the human mind. However, it’s interesting to observe within this history the shift which allowed the idea of ‘mind’ to become equated with that of ‘brain’ or brain functioning. Computing pioneer Alan Turing, in his paper Intelligent Machinery (written in 1948, published 1969), discusses a range of ways by which a machine “might be made to show intelligent behaviour”, taking the analogy of the human brain as a “guiding principle”. This equation of mind with brain function was applied literally in the groundbreaking work of Walter Pitts and Warren McCulloch, who defined human thought as a net of interconnected neurons. Establishing the first computational theory of mind, Pitts and McCulloch proposed neural nets, or mathematical models of nerve behaviour, marking a significant shift towards conceiving of ‘mind’ and the laws of mind as sitting within the realm of information technology. Today, the correlation between cognition and information processing seems obvious; before these developments in thought, however, computation hadn’t been seen as an obvious choice for developing artificially intelligent systems. McCorduck (2004) notes that it was around this time that the computer began to be referred to as the “thinking machine”.

Thinking too narrow? Think cross-culturally

The earliest foundations of thought that contributed to computing and AI can’t be discounted - they represent fascinating developments in philosophy, and complex interactions between culture, philosophy, and the sciences. From this thinking has emerged an entire Information Age, and the evolution of this thought has led to the development of increasingly complex forms of artificial intelligence. That said, the thinking which has shaped these technologies, and which stands to shape our world, notions of self, and human relations, is distinctively reductionistic and representative of a very particular branch of Western philosophical thought. Here we see the dominance of particular philosophies with histories centred around Europe and North America; this is thinking that could be described as parochial, were it not for the global reach this narrow band of thought has achieved. You may have noticed that the characters that populate the historical narrative above are exclusively male and white, with the thinking of women and of people of colour excruciatingly absent. I believe that if we’re to engage in genuinely creative approaches to artificial forms of intelligence, we should at least start with some expanded notions and theories of mind, and bring some open, self-reflexive thinking to how our own culturally conditioned worldviews are likely to predispose us to certain ideas on the nature of ‘being’ and ‘knowing’.

The recently published Taking Back Philosophy: A Multicultural Manifesto, by comparative philosopher Bryan W. Van Norden, argues that a narrow-minded and xenophobic culture within Western philosophy schools has led to the extensive philosophies of China, India, Africa and of Indigenous peoples being marginalised, or at worst completely ignored. Van Norden sees this as a form of ‘Orientalism’ - another manifestation of dualist thinking which posits everything “from the Middle East to Japan” as “Oriental”, i.e. “irrational, depraved, childlike, ‘different’”; making the European “rational, virtuous, mature, ‘normal’”. What compounds the omission of these schools of thought is the fact that the world’s earliest centres of academic learning emerged in Asia and Africa, predating the major European universities founded in Medieval times. From Nalanda - India’s ancient university and Buddhist centre of learning, founded in the 5th Century CE and known for its systematic study of logic, epistemology and metaphysics (Thompson, 2015) - to the University of Quaraouiyine in Fez, Morocco, founded in 859 and the world’s oldest existing and continuously operating university, there is undeniable knowledge and thought in existence outside of the Anglo-European which has potential to contribute greatly to our concepts of mind, consciousness and intelligence. According to comparative philosopher Evan Thompson (2015), the earliest maps of consciousness emerged from the Indian tradition of philosophy. The Upanishads, key Vedic texts, meticulously detailed and categorised different states of consciousness in the 6th-7th Centuries BCE. To dismiss such significant theories of mind in the discussion around cognition and consciousness represents a major oversight.

A visual depiction of the ‘buddha-mind’ of the deity Vajrabhairava from the Tibetan Vajrayana / Tantric Buddhist tradition. ‘Attributes of rDo-rje ‘Jigs-byed (Vajrabhairava)’. Credit: Wellcome Collection. CC BY

What could genuinely creative thinking about mind, intelligence and consciousness look like?

“I believe the automatic assumption in cognitive neuroscience that brain and mind are invariably two sides of the same activity limits the scope of scientific enquiry. That assumption means that science looks for its answers only within an arbitrarily limited framework. With so many new developments and discoveries in brain science, perhaps scientists might break out of this paradigm and expand the parameters brain science has set for itself.”

Dalai Lama, ‘On the Luminosity of Being’, New Scientist, 2003

Theories of creativity often focus on the role of expansiveness of thought; the ability to explore a challenge or problem expansively is often described as a precursor to creative activity. Theories of creative process, including design thinking (which has sought to systematise creative problem-solving), emphasise the tensions between divergence and convergence of thought, idea generation and selection, and moving between openness and criticality (Bocchi et al., 2014). So how do we achieve genuinely divergent thinking when it comes to thinking about mind, intelligence and consciousness of both the human and artificial variety? How might one break out of the paradigms and parameters the Dalai Lama mentions?

I see a multi-ontological approach as offering great possibilities for exploring questions of mind, and opening up otherwise narrow thinking. Thinking widely and across disciplines has become a common way of working in the artificial intelligence sciences, and interdisciplinary work has contributed to some of the major advances in the field. One example can be seen in the work of Norbert Wiener. The originator of Cybernetics, Wiener was a famously interdisciplinary thinker who enjoyed working on what he described as the “boundary regions” of the sciences, often within teams of scientists from a range of disciplines (McCorduck, 2004). Trained in Mathematics, Wiener teamed up with physiologist Arturo Rosenblueth and Julian Bigelow, a researcher who was studying mechanical feedback systems. This intersection of knowledge allowed them to model the central nervous system, proposing the first systems approach to biology.

The field of artificial intelligence science has been marked by numerous breakthroughs and paradigm shifts that have occurred when researchers work across disciplines. But why not expand this interdisciplinarity even wider - introducing philosophies and thought from outside one’s culture and field? One example of expanded thinking about intelligence (both natural and artificial forms) comes in the form of embodied cognition and enactivism. Embodied cognition and enactivist approaches counter the reductionist forms of thinking around ‘mind’ that abstract it from its larger environment and simplify it down to component parts, e.g. brain or neuron. This approach grew out of interdisciplinary collaboration between three researchers: neuroscientist Francisco Varela, psychologist Eleanor Rosch, and comparative philosopher Evan Thompson. Writing of their key text The Embodied Mind (1991; re-released 2017), Thompson describes the authors’ intent to “create cross-fertilization between cognitive science and the phenomenology of human experience” in response to a cognitive science field which was “dominated by the computer model of the mind” and dismissed the value of lived experience. In a wonderfully multi-ontological way the researchers engaged other philosophical frames - particularly Buddhist philosophy and first-hand accounts of meditation practice from Buddhist practitioners - in developing an entirely new approach to concepts of mind and cognition. These expanded notions of mind have had direct applications to AI, presenting an alternative to computationalist approaches.

An open-mindedness that moves us beyond the brain

Thinking multi-ontologically can help us to expand our notions of mind and ideas of intelligence beyond the brain, as well as beyond our own cultures. Within Western science, the jury is still out on where mind and consciousness are actually located. Several non-Western philosophies and sciences see consciousness as being located elsewhere than the brain. Buddhist philosophy sees consciousness, considered the “luminosity of pure awareness”, as a non-physical state located neither in the brain nor body. Buddhist philosophy also sees mind and consciousness as operating at a range of levels: the gross (which refers to sensory-experience-related consciousness dependent upon the brain) and more subtle levels of mind which aren’t physically derived (Thompson, 2015). Another interesting example is the concept of ‘Shen’ within Taoist philosophy. ‘Shen’ has a complex set of meanings; however, it can be translated simultaneously as mind, soul, or spirit. It is seen as one of the vital energies sustaining life, and rather than being located in the brain, its correlate within Traditional Chinese Medicine is the heart.

Thinking ontologically requires us to ‘get meta’ - to acknowledge our own worldview and mindset by attempting to step out of it, to consider the processes behind how we think, the practices which we use to generate knowledge, and the values and beliefs which inform these. Metaphysical thinking and enquiry can be mind-bending at the best of times, but it’s worth giving it a shot now and again if you want to check your worldview, and the particular cultural biases you may be bringing to the perception, understanding and analysis of any particular issue. The realisation that one’s way of viewing the world is simply one amongst many can offer inspiration and creative impetus. Applying genuine creativity to the ways we think about mind and consciousness can help us expand the ways in which we imagine artificial forms of intelligence, develop solutions to challenges in the field and perhaps realise deeper insight about ourselves.

About the Author

Ana Tiquia is a cultural producer, futures thinker and strategist who works across art and culture, design, and creative technology.

Text References

Bocchi, G., Cianci, E., Montuori, A., Trigona, R. (2014). Eureka! The Myths of Creativity. World Futures, 70 (5-6), 276-308. DOI:10.1080/02604027.2014.977073

Kurzweil, R. (1990). The Age of Intelligent Machines. Cambridge, MA: MIT Press.

Lama, Dalai. (2003). On the luminosity of being. (Human Nature). New Scientist, 24 May 2003, p. 42.

McCorduck, P. (2004). Machines Who Think. Natick, MA: A K Peters.

Porush, D. (1985). The Soft Machine – Cybernetic Fiction. New York, NY: Methuen.

Thompson, E. (2015). Waking, Dreaming, Being. New York, NY: Columbia University Press.

Turing, A. (1969). Intelligent Machinery. Machine Intelligence, 5, B. Meltzer and D. Michie, eds. Edinburgh: Edinburgh University Press.